Voice agents will not completely fail, but they will not succeed without strong language infrastructure. Voice AI can handle simple queries, automation, and basic conversations, but it still struggles with real human language: accents, emotions, and mixed languages. The future is not just voice automation; it is voice AI that truly understands people.

Voice agents depend on technologies like NLP, ASR, and NLU, and they fail when the language infrastructure behind those technologies is weak. Such systems cannot process real conversations: they struggle with multilingual speech, code-switching, and natural human expressions, which leads to wrong responses and a poor user experience.

In 2026, businesses using voice AI are seeing better results only when they invest in strong language infrastructure. This helps improve accuracy, reduce errors, and deliver more natural conversations. The real advantage is not just automation; it is building voice agents that understand users clearly and communicate like humans.

What is Language Infrastructure in Voice AI?

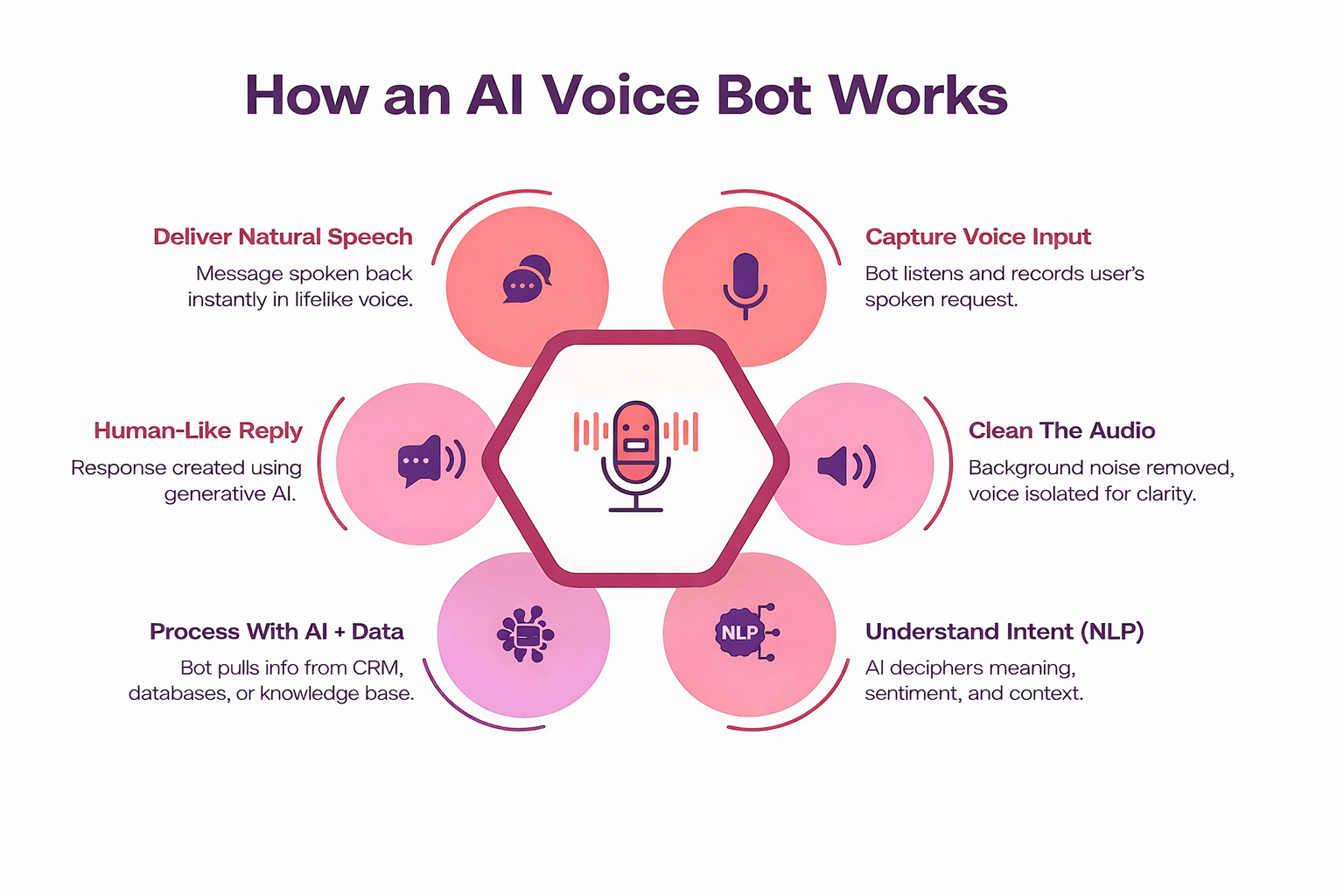

Language infrastructure is the foundation that helps voice bots understand and respond to human speech correctly.

It includes:

- Automatic Speech Recognition (ASR): Converts speech to text

- Natural Language Processing (NLP): Understands meaning and intent

- Natural Language Understanding (NLU): Extracts user intent

- Machine Translation (MT): Supports multiple languages

- Text-to-Speech (TTS): Converts text back to voice

Language infrastructure is what makes a voice bot understand like a human, not just hear like a machine.
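To make the flow between these components concrete, here is a minimal Python sketch of how the stages might be chained. The `VoicePipeline` class and the `fake_*` functions are hypothetical stand-ins invented for illustration, not a real library; the machine translation stage is left out to keep the sketch short.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoicePipeline:
    """Chains the stages of a voice bot; every stage is a plain callable."""
    asr: Callable[[bytes], str]      # speech audio -> transcript
    nlu: Callable[[str], str]        # transcript -> intent label
    respond: Callable[[str], str]    # intent label -> reply text
    tts: Callable[[str], bytes]      # reply text -> speech audio

    def handle(self, audio: bytes) -> bytes:
        transcript = self.asr(audio)
        intent = self.nlu(transcript)
        reply = self.respond(intent)
        return self.tts(reply)


# Hypothetical stand-ins so the sketch runs without any real models.
def fake_asr(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio is already text


def fake_nlu(text: str) -> str:
    return "check_balance" if "balance" in text.lower() else "unknown"


def fake_respond(intent: str) -> str:
    replies = {"check_balance": "Your balance is available in the app."}
    return replies.get(intent, "Sorry, could you rephrase that?")


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend the text is synthesized audio


pipeline = VoicePipeline(fake_asr, fake_nlu, fake_respond, fake_tts)
print(pipeline.handle(b"What is my balance?").decode("utf-8"))
```

Because each stage feeds the next, an error in the first stage (a wrong transcript) carries through to every later stage, which is why the quality of the whole chain matters more than any single component.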

Why Voice Bots Fail Without Strong Language Infrastructure

Here’s a closer look at the key reasons why voice bots fail without strong language infrastructure and struggle to deliver accurate, human-like conversations.

1. They Don’t Understand Real Human Speech

Most voice bots are trained on clean, structured language. But real users speak differently:

- Use slang and informal words

- Mix languages (code-switching)

- Speak with accents and dialects

Studies show that AI systems struggle with accents and dialect diversity, especially in countries like India.

Result:

The bot misunderstands users even when the request is simple.

2. Over-Reliance on English

Many voice bots are designed for English-only interactions.

But in real life:

- Users switch between languages mid-sentence

- Regional languages dominate in customer service

- Users feel more comfortable in native languages

This creates a huge gap between how users speak and what bots understand.

Example:

A user may start in English but switch to Hindi or Marathi for clarity.

Result:

The bot fails silently, and the user disconnects.

3. Poor Handling of Multilingual and Code-Switching

When a bot cannot parse mixed-language input correctly, it gets confused. As a result, it misses user intent and gives inaccurate responses.

In multilingual markets:

- People mix languages naturally

- Context changes with language

- Meaning depends on cultural usage

AI models often struggle with this because of:

- Limited multilingual datasets

- Lack of contextual training

- Fragmented language resources

Result:

The bot hears words but misses the meaning.

4. Weak Natural Language Understanding (NLU)

Weak NLU limits the bot’s ability to handle real, dynamic conversations beyond fixed scripts. As a result, users receive repetitive or incorrect responses, leading to frustration.

Many bots rely on:

- Predefined scripts

- Limited intents

- Keyword matching

But real conversations are unpredictable.

Research shows scripted bots fail when users go off-script or ask complex questions.

Result:

- Repetitive responses

- Wrong answers

- Frustrated users
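A small sketch shows why keyword matching breaks down once users go off-script. The keyword table and intent names below are invented for illustration and do not come from any particular product.

```python
# Hypothetical keyword-to-intent table for a banking bot.
KEYWORD_INTENTS = {
    "balance": "check_balance",
    "block": "block_card",
}


def keyword_intent(utterance: str) -> str:
    """Return the first intent whose keyword appears as a word in the utterance."""
    words = utterance.lower().split()
    for keyword, intent in KEYWORD_INTENTS.items():
        if keyword in words:
            return intent
    return "fallback"


print(keyword_intent("check my balance"))               # check_balance
print(keyword_intent("how much money do I have left"))  # fallback
print(keyword_intent("do not block my card"))           # block_card
```

The second request means the same thing as the first but contains no known keyword, so the bot falls back. The third contains a keyword but negates it, so the bot picks the opposite of what the user asked for.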

5. Lack of Real-World Training Data

A lack of real-world training data causes voice bots to perform poorly in real situations where noise, interruptions, and emotions exist. As a result, accuracy drops and conversations feel unreliable.

Voice bots often fail because they are trained on:

- Clean datasets

- Controlled environments

- Limited speech samples

But real-world conditions include:

- Background noise

- Interruptions

- Emotional tone

Without diverse training data, accuracy drops significantly.
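One common way to put a number on this kind of accuracy drop is word error rate (WER): the word-level edit distance between what the user said and what the ASR system transcribed, divided by the number of words the user said. The sketch below is a minimal implementation; the two example transcripts are made up for illustration and are not measured results.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Levenshtein distance over words, keeping only the previous row.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion
                curr[j - 1] + 1,     # insertion
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


# Made-up transcripts: the same request heard cleanly and misheard.
print(word_error_rate("please block my card", "please block my card"))  # 0.0
print(word_error_rate("please block my card", "please lock my car"))    # 0.5
```

Computing this separately for clean recordings and for noisy or interrupted ones is a simple way to see how much accuracy is lost outside controlled conditions.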

6. Accent and Dialect Challenges

Voice bots struggle to understand different accents and regional speech patterns. This leads to misunderstanding even when users speak clearly.

Most ASR systems are biased toward standard English accents.

But users speak in:

- Regional accents

- Mixed pronunciations

- Local dialects

Result:

Even correct sentences are misunderstood.

7. Language Debt Builds Over Time

Ignoring language infrastructure early creates long-term technical issues and complexity. Over time, the system becomes harder to scale and maintain.

When companies ignore language infrastructure early, they create language debt:

- Constant bug fixes

- Increasing complexity

- Poor scalability

Instead of improving, the system becomes harder to maintain.

8. Loss of User Trust

When bots give wrong answers or sound unnatural, users quickly lose confidence. Once trust is lost, users stop interacting with the system.

If a bot:

- Mispronounces names

- Gives wrong answers

- Sounds unnatural

Users lose trust immediately.

And once trust is lost, users stop using the system.

Role of NLP, ASR, and Multilingual AI in Success

Strong voice systems depend on:

Advanced NLP

- Understands intent, not just words

- Handles complex queries

Robust ASR

- Works with accents and noise

- Learns from real speech patterns

Multilingual AI

- Supports multiple languages seamlessly

- Handles code-switching

Together, they create a human-like conversational experience.

E-E-A-T Perspective (Experience, Expertise, Authority, Trust)

Experience

Real-world deployments show that voice bots fail more in production than in demos.

Expertise

Building voice AI requires deep expertise in:

- Linguistics

- Machine learning

- Regional language behavior

Authority

Industry research (AI benchmarks, enterprise studies) confirms that language gaps are a major limitation.

Trust

Users trust systems that:

- Understand them correctly

- Speak naturally

- Respect their language

How to Build Strong Language Infrastructure

Here’s a closer look at the key steps to build strong language infrastructure for accurate and scalable voice AI systems.

Train on Real Conversational Data

Use real speech samples instead of only written text to improve accuracy in real-world conversations.

Support Multilingual Input Natively

Build systems that understand multiple languages from the start, not as an add-on feature.

Handle Code-Switching

Allow users to switch languages naturally within the same conversation without errors.
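As a rough illustration of the first step, the sketch below splits an utterance into runs of tokens by writing script, using the Devanagari Unicode block (U+0900 to U+097F) to spot Hindi or Marathi words. The function names are hypothetical, and the approach is deliberately simplistic: it cannot detect Hindi typed in Latin letters, which a real system would need a trained language-identification model to handle.

```python
from typing import List, Tuple


def token_script(token: str) -> str:
    """Classify a token by script using Unicode ranges only."""
    if any("\u0900" <= ch <= "\u097f" for ch in token):
        return "devanagari"
    if any(ch.isascii() and ch.isalpha() for ch in token):
        return "latin"
    return "other"


def script_segments(utterance: str) -> List[Tuple[str, str]]:
    """Group consecutive tokens that share a script into segments."""
    segments: List[Tuple[str, str]] = []
    for token in utterance.split():
        script = token_script(token)
        if segments and segments[-1][0] == script:
            segments[-1] = (script, segments[-1][1] + " " + token)
        else:
            segments.append((script, token))
    return segments


def is_code_switched(utterance: str) -> bool:
    """True when the utterance contains more than one recognized script."""
    scripts = {s for s, _ in script_segments(utterance) if s != "other"}
    return len(scripts) > 1


for script, text in script_segments("my card ब्लॉक कर दो please"):
    print(script, "->", text)
print(is_code_switched("my card ब्लॉक कर दो please"))
```

Once an utterance is segmented this way, each segment can be routed to a language-appropriate model, or the whole utterance can be flagged so the bot does not treat the switch as an error.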

Continuously Improve Models

Use real user interactions to regularly retrain and improve system performance.
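One way to start is to pick out the interactions the system handled worst and send them for review and labeling before retraining. The sketch below assumes each logged interaction carries an ASR confidence score and a predicted intent; the `Interaction` fields, the `fallback` intent name, and the 0.8 threshold are all hypothetical choices for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Interaction:
    transcript: str
    asr_confidence: float  # hypothetical score between 0 and 1
    intent: str            # "fallback" means no intent was recognized


def select_for_review(log: List[Interaction],
                      min_confidence: float = 0.8) -> List[Interaction]:
    """Keep interactions with low ASR confidence or no recognized intent."""
    return [
        item for item in log
        if item.asr_confidence < min_confidence or item.intent == "fallback"
    ]


log = [
    Interaction("check my balance", 0.95, "check_balance"),
    Interaction("please lock my car", 0.55, "fallback"),
    Interaction("how much money do I have left", 0.91, "fallback"),
]
for item in select_for_review(log):
    print(item.transcript)
```

Reviewed and corrected examples from this queue can then be added to the training set, so the next model version sees the speech patterns the current one missed.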

Focus on Context, Not Just Words

Understand meaning based on context, tone, and situation, not just individual words.

Conclusion

Voice agents in 2026 use advanced technologies like NLP, ASR, NLU, and Machine Learning to automate and improve conversations. But without strong language infrastructure, they cannot understand real human speech, multilingual input, or context accurately.

Modern voice AI systems improve over time using real data, becoming more natural, context-aware, and efficient. Strong language infrastructure ensures better accuracy, smoother conversations, and scalable performance across industries.

The future of voice AI is not just automation; it is clear, human-like communication.

Ready to build smarter voice agents? Invest in strong language infrastructure to deliver faster, more accurate, and personalized user experiences.

Krishna Handge

WOWinfotech

Apr 18, 2026
